Artificial intelligence is changing the way we live, learn, and work. It powers medical tools, smart classrooms, and intelligent systems that shape decisions every day. As this technology becomes more capable, it also raises important questions about responsibility and fairness. As a result, the ethics of artificial intelligence has become one of the most discussed topics in modern technology.

Understanding these ethical challenges helps ensure that innovation benefits everyone. This article explains what AI ethics means, how global organizations like UNESCO guide responsible use, and how AI can remain fair, transparent, and human-centered.

What Are the Ethics of Artificial Intelligence?

The ethics of artificial intelligence are the moral rules and principles that guide how AI systems are built and used. They aim to ensure that machines operate in ways that respect human rights, fairness, and accountability.

In simple terms, AI ethics asks:

- Is the technology fair and unbiased?

- Does it respect privacy and human dignity?

- Can we explain its decisions?

- Who is responsible if something goes wrong?

Ethical AI isn’t just about coding rules; it’s about building trust between humans and technology.

Why AI Ethics Matters

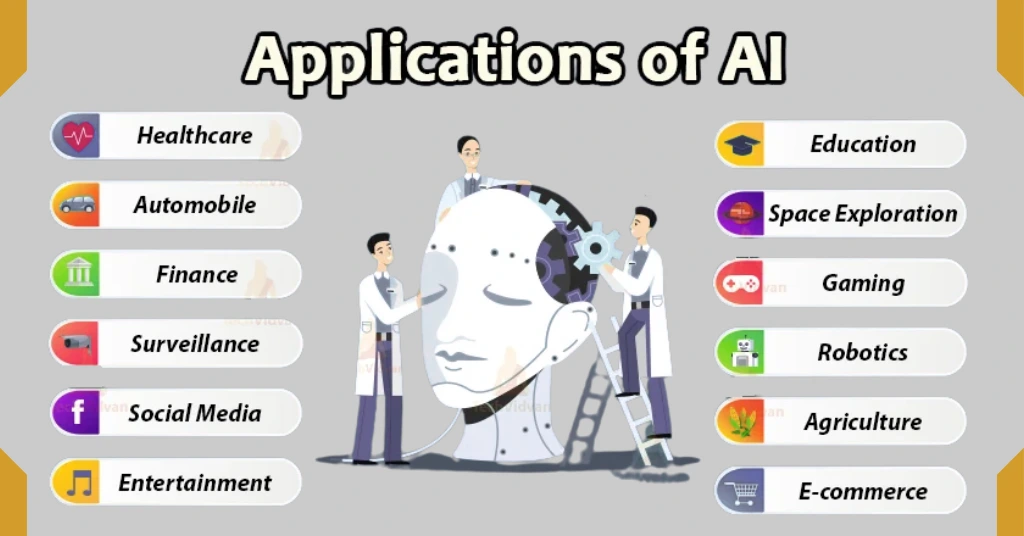

AI now affects many areas of life, such as healthcare, education, hiring, and even law. If the data used to train an AI system is biased, the system may make unfair decisions. For example, facial recognition tools have shown higher error rates for people with darker skin tones, and automated hiring systems trained on historical hiring data can favor male candidates.

Without clear ethical rules, AI might increase inequality instead of reducing it. That is why researchers, companies, and governments are now creating AI governance policies and ethical frameworks to make sure AI is used responsibly.
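To make the hiring example more concrete, one common way to check an automated screening system for this kind of bias is to compare how often each group is selected. The sketch below is a minimal illustration, not a complete fairness audit: the helper names `selection_rates` and `disparate_impact_ratio` are hypothetical, the outcomes are invented toy data, and the 0.8 cutoff follows the "four-fifths" rule of thumb used in US employment guidelines.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive outcomes for each group.

    `decisions` is a list of (group, selected) pairs, where `selected` is a bool.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Divide the lowest group selection rate by the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical screening outcomes, invented purely for illustration.
decisions = (
    [("men", True)] * 6 + [("men", False)] * 4
    + [("women", True)] * 3 + [("women", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)  # {'men': 0.6, 'women': 0.3}
print(ratio)  # 0.5
# The 0.8 threshold is the "four-fifths" rule of thumb, used here as an assumption.
print("possible adverse impact" if ratio < 0.8 else "within four-fifths threshold")
```

In this toy data one group is selected at half the rate of the other, well under the 0.8 threshold. A result like this is a signal to investigate the training data and the model more closely, not proof of discrimination on its own.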

Core Principles of Ethical AI

Many global organizations, including UNESCO, have defined key principles for the ethical use of AI. These principles help guide how technology should be designed and managed.

- Human Rights and Dignity

AI should protect the rights and dignity of all people. It must respect privacy, freedom, and equality.

- Fairness and Non-Discrimination

AI systems should treat everyone equally and avoid reinforcing social or cultural bias.

- Transparency and Explainability

People should understand how AI systems make decisions. Clear explanations build trust and accountability.

- Safety and Security

AI should be tested to prevent harm and kept secure from misuse or attacks.

- Accountability

Humans, not machines, must remain responsible for AI decisions and their consequences.

- Environmental and Social Wellbeing

Ethical AI should account for its energy use and environmental footprint, promote sustainable technology, and benefit society as a whole.

Ethical Concerns in Different Sectors

1. AI in Education

AI can personalize lessons and help teachers understand student progress. However, it also brings challenges such as protecting student data and avoiding profiling. Ethical AI in education ensures that learning tools are fair, transparent, and respectful of privacy.

2. AI in Healthcare

AI supports doctors with diagnoses and predictions, but it must be used with care. Patient consent, data protection, and algorithm accuracy are major concerns. A biased system could harm patients, so regular testing and oversight are critical.

3. AI in Business and Marketing

AI tools improve marketing and automation, but they must not be used to manipulate users. Responsible companies follow privacy laws and focus on honest communication.

4. AI and Human Rights

AI systems influence access to jobs, welfare, and justice. Ethical AI must never discriminate based on race, gender, or background. Every person deserves fair treatment and equal opportunity.

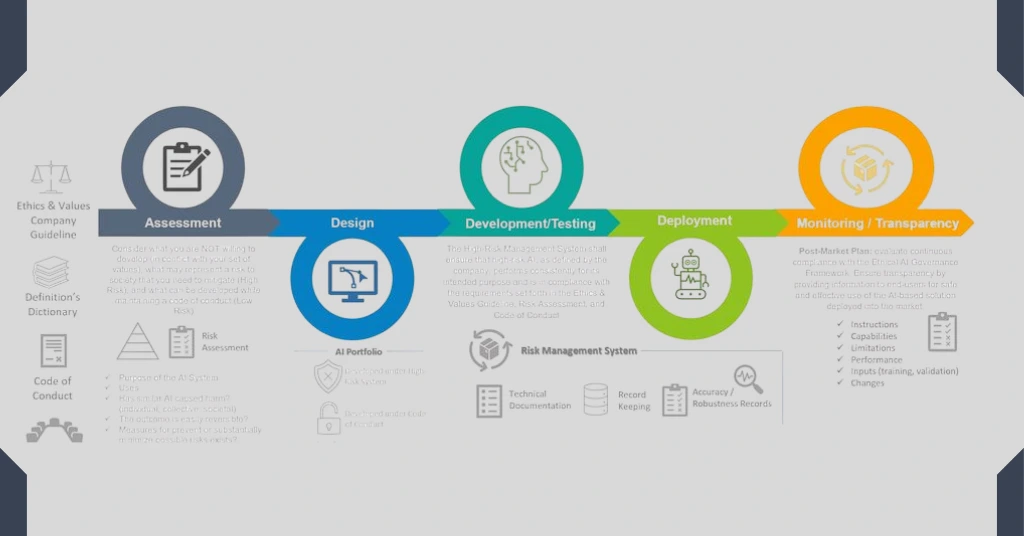

Responsible AI and Governance Frameworks

Ethical AI is not only about good values. It also depends on strong policies that guide how technology is developed and monitored.

- UNESCO’s Recommendation on the Ethics of AI (2021): This is the first global standard on AI ethics, and it promotes human rights, transparency, and sustainability.

- OECD AI Principles: These guidelines encourage inclusive growth, fairness, and responsible innovation.

- EU AI Act: This is the first comprehensive law regulating AI. It takes a risk-based approach and sets strict requirements for high-risk AI systems to ensure that companies use them safely.

- Responsible AI Institute: This organization provides certification and best practices for organizations adopting AI.

Together, these frameworks form the foundation of AI governance: creating and enforcing rules that make AI more accountable and transparent.

How to Use AI Ethically as a Student or Professional

Ethical AI applies to everyone, not just tech experts. Whether you are a student, teacher, or business professional, there are a few simple steps to use AI responsibly.

- Verify Data Sources: Always question where data comes from.

- Acknowledge AI Limitations: AI tools can make mistakes, so avoid relying on them alone for important decisions.

- Ensure Transparency: Explain when and how AI tools are used in research or content creation.

- Respect Privacy: Do not share sensitive data without consent.

- Promote Fair Use: Use AI to assist creativity and learning, not to replace honesty or integrity.

AI and the Future of Ethics

The future of AI will depend on how well societies balance innovation with ethics. As AI continues to evolve, the need for clear ethical guidelines will only grow. Self-driving cars, humanoid robots, and advanced automation will all require systems that prioritize human safety and values.

The goal is not to limit technology but to make it more human-focused. Ethical AI will allow progress to continue in a way that respects people, communities, and the environment.

Frequently Asked Questions (FAQs)

1. What are the main ethical issues in artificial intelligence?

The main ethical issues in artificial intelligence include bias, lack of transparency, privacy concerns, and accountability. These problems can lead to unfair or unsafe outcomes if not managed correctly.

2. Why is the ethics of artificial intelligence important?

Ethics in AI ensures that technology promotes fairness, protects human rights, and prevents harm. It helps people trust AI and encourages responsible innovation across industries.

3. How can AI be used ethically in everyday life?

AI can be used ethically by being open about its use, protecting data, and avoiding bias. Both individuals and organizations should follow responsible guidelines to make sure AI supports fairness and safety.

Conclusion

The ethics of artificial intelligence is not a barrier to progress but a pathway to a better future. Ethical principles ensure that innovation stays fair, transparent, and respectful of people. By following global frameworks and responsible practices, we can create AI that improves lives while protecting human values.

Smart technology should always work for people, not against them. The more we focus on ethics and responsibility, the closer we move toward a digital world that truly benefits everyone.